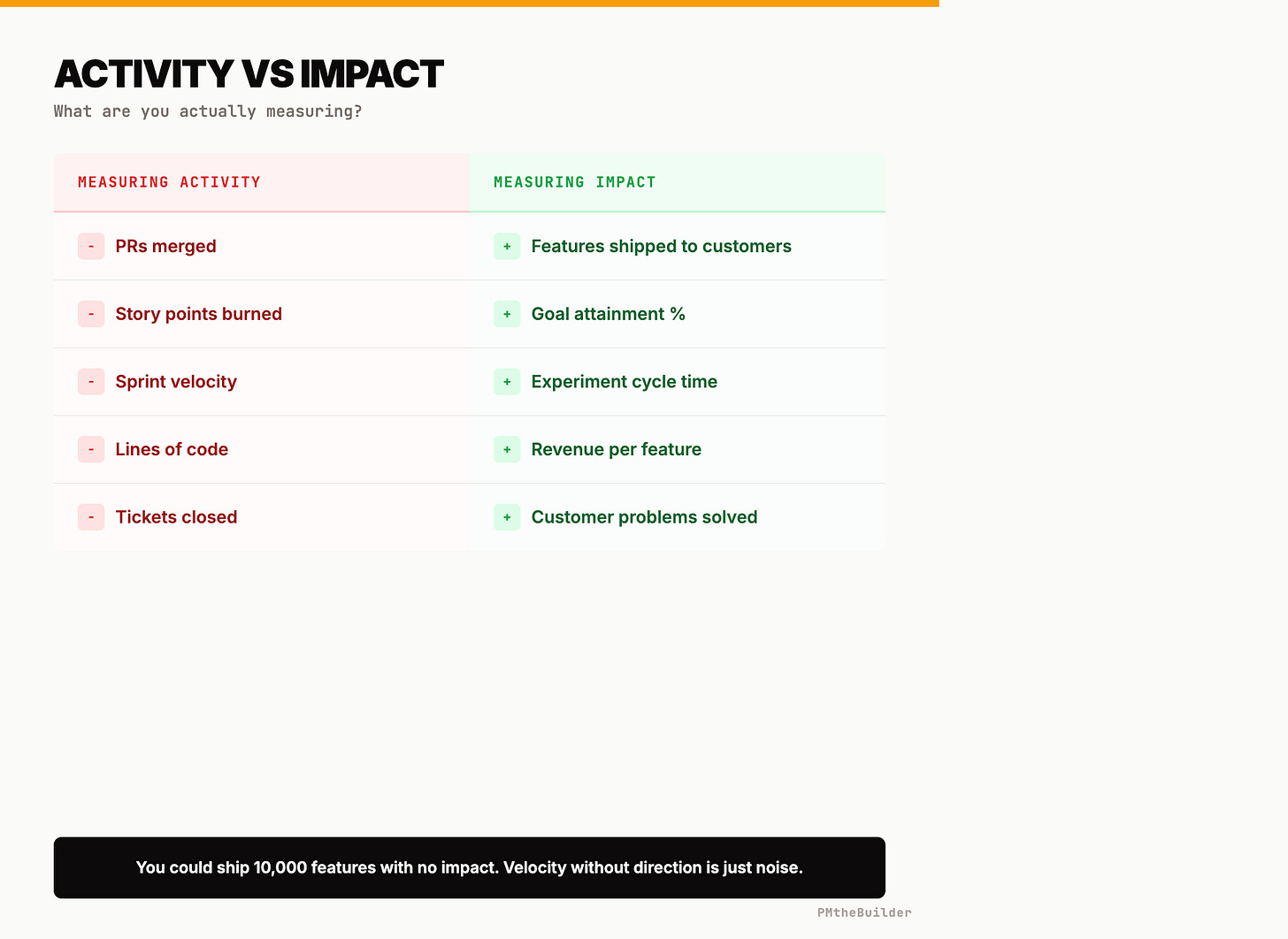

Velocity by Features, Not PRs: The Measurement Shift Every AI PM Team Needs

A 40-person product engineering team adopted AI coding agents in January. By March, their dashboards looked incredible: PRs merged per week went from 120 to 380. Sprint velocity nearly tripled. Deployment frequency doubled. Leadership celebrated at the all-hands.

Then someone asked the obvious question: “Did any of this move our product metrics?” Monthly active usage: flat. Feature adoption: flat. Revenue per user: actually down 0.3%.

The team was shipping 3× more code and getting flat-to-negative business impact. They weren’t moving faster. They were generating waste faster, and every metric they tracked told them they were crushing it.

This is the Volume Trap, and it’s hitting every AI-augmented team that hasn’t updated their measurement system. Here’s the complete replacement framework, with the exact templates, GitHub Actions enforcement, and dashboard setup to implement by next Friday.

Step 1: Replace PR Metrics With Effective Velocity Rate (EVR)

EVR = Features hitting their success threshold ÷ Total features shipped.

That same 40-person team implemented EVR and discovered their baseline: only 22% of shipped features actually hit their target metric. After restructuring around EVR, they cut total feature output by 35% and raised their hit rate to 48% within one quarter — meaning more business impact from fewer shipped features.
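To see why fewer features can mean more impact, here is a minimal sketch of the arithmetic. The starting count of 100 shipped features is an assumption chosen for round numbers; the 22%, 35%, and 48% figures come from the example above.

```python
def evr(features_hitting_threshold: int, features_shipped: int) -> float:
    """Effective Velocity Rate: share of shipped features that hit their threshold."""
    if features_shipped <= 0:
        raise ValueError("features_shipped must be positive")
    return features_hitting_threshold / features_shipped


# Assumed starting point: 100 features shipped in the baseline quarter.
before_shipped = 100
before_hits = 22                          # 22% baseline hit rate

after_shipped = 65                        # 35% fewer features shipped
after_hits = round(after_shipped * 0.48)  # 48% hit rate after restructuring

print(f"before: EVR={evr(before_hits, before_shipped):.0%}, hits={before_hits}")
print(f"after:  EVR={evr(after_hits, after_shipped):.0%}, hits={after_hits}")
```

Under that assumption, the team ships 35 fewer features and still lands more that hit their target.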

The mechanism: require a Feature Impact Brief before any work starts:

    feature: Social proof on pricing page
    hypothesis: Customer logos increase checkout trust
    target_metric: pricing_to_checkout_rate
    baseline: "4.2% current conversion"
    success_threshold: "+2pp (to 6.2%) within 30 days"
    measurement: amplitude_cohort_comparison
    eval_date: 2026-04-17
    automation_tier: 2 # 1=auto-merge, 2=one review, 3=full approval
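If you want to check briefs mechanically, a sketch like the one below could work. It assumes the brief has already been loaded into a dictionary (for example by a YAML parser); the function name `brief_problems` and the rule that every field is mandatory are illustrative choices, not part of the template itself.

```python
REQUIRED_FIELDS = (
    "feature", "hypothesis", "target_metric", "baseline",
    "success_threshold", "measurement", "eval_date", "automation_tier",
)
VALID_TIERS = (1, 2, 3)


def brief_problems(brief: dict) -> list[str]:
    """Return the fields that are missing, blank, or invalid in a brief."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = brief.get(field)
        if value is None or str(value).strip() == "":
            problems.append(field)
    # A tier that is present must be one of the three defined tiers.
    if "automation_tier" not in problems and brief["automation_tier"] not in VALID_TIERS:
        problems.append("automation_tier")
    return problems


example_brief = {
    "feature": "Social proof on pricing page",
    "hypothesis": "Customer logos increase checkout trust",
    "target_metric": "pricing_to_checkout_rate",
    "baseline": "4.2% current conversion",
    "success_threshold": "+2pp (to 6.2%) within 30 days",
    "measurement": "amplitude_cohort_comparison",
    "eval_date": "2026-04-17",
    "automation_tier": 2,
}
print(brief_problems(example_brief))  # an empty list means the brief is complete
```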

No brief = no build. Enforce this with a GitHub Actions check:

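    # .github/workflows/experiment-gate.yml
    name: Experiment Brief Check
    on:
      pull_request:
        # "edited" re-runs the check when someone fixes the PR description.
        types: [opened, edited, reopened, synchronize]
    jobs:
      check:
        runs-on: ubuntu-latest
        steps:
          - name: Verify experiment link
            env:
              # Pass the PR body as an environment variable instead of pasting it
              # into the script, so its contents are never executed as shell.
              PR_BODY: ${{ github.event.pull_request.body }}
            run: |
              if ! printf '%s\n' "$PR_BODY" | grep -qE '\[exp-[0-9]+\]'; then
                echo "❌ PR must link to experiment ID"
                echo "Add [exp-XX] to PR description"
                exit 1
              fi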

This single gate stopped the Volume Trap for that team. Agents still build fast — but they only build things that have a hypothesis and a measurement plan.

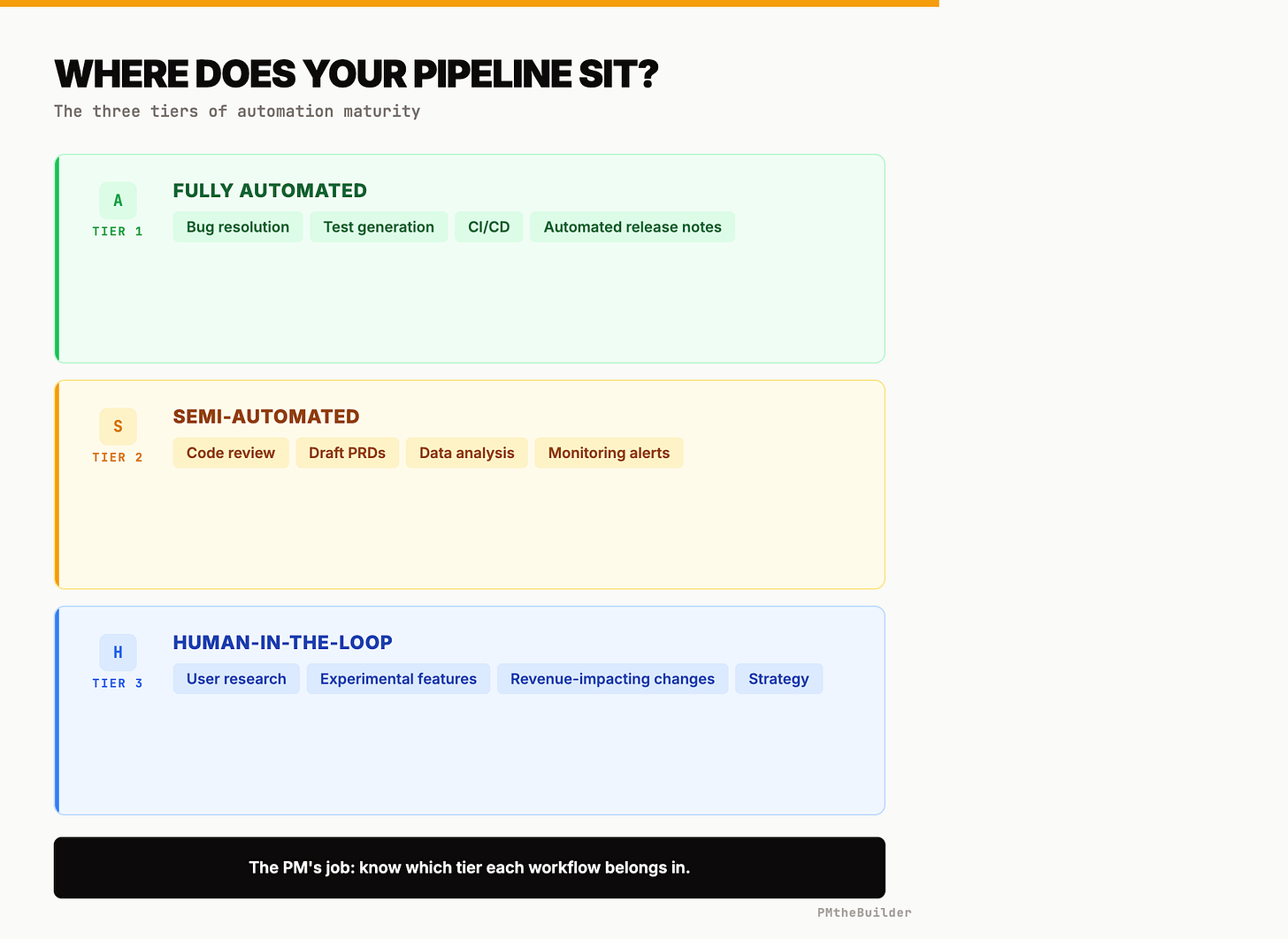

Step 2: Three Automation Tiers (with CI enforcement)

Not everything needs the same oversight. Map every project to a tier:

Tier 1 — Auto-merge (no human): Bug fixes with green tests, documentation, dependency bumps, logging, test coverage. These changes don’t affect user behavior. Configure branch protection with required status checks only — no reviewer requirement. Add an automerge label workflow. ~40-50% of agent output falls here.

Tier 2 — One reviewer (5-min check): UI tweaks, A/B test variants, copy changes, non-revenue feature work, performance optimizations. These affect user experience but not revenue or data integrity. Branch protection requires 1 review. Use CODEOWNERS to auto-assign the right reviewer. ~30-40% of output.

Tier 3 — Two reviewers + rollout plan: Pricing changes, checkout flow, authentication, data/privacy changes, infrastructure migrations, experiments on pages with 10K+ daily visitors. Could cost money, lose data, or break user trust. Branch protection requires 2 reviews. Enforce a rollout plan section:

    - name: Tier 3 deploy plan check
      if: contains(github.event.pull_request.labels.*.name, 'tier-3')
      env:
        # Read the PR body from the environment so it is never executed as shell.
        PR_BODY: ${{ github.event.pull_request.body }}
      run: |
        if ! printf '%s\n' "$PR_BODY" | grep -q "## Rollout Plan"; then
          echo "❌ Tier 3 requires a Rollout Plan section"
          exit 1
        fi

~10-20% of changes, but 80%+ of risk.
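One way to assign tiers automatically is by the files a change touches. The sketch below is illustrative only: the path patterns are hypothetical and would need to match your repository, and defaulting unmatched files to Tier 2 is an assumption.

```python
from fnmatch import fnmatch

# Hypothetical path rules, checked in order; the first matching tier wins per file.
TIER_RULES = [
    (3, ["billing/*", "checkout/*", "auth/*", "migrations/*"]),
    (1, ["docs/*", "*.md", "tests/*"]),
]
DEFAULT_TIER = 2  # assumption: anything unmatched gets one human review


def tier_for_path(path: str) -> int:
    for tier, patterns in TIER_RULES:
        if any(fnmatch(path, pattern) for pattern in patterns):
            return tier
    return DEFAULT_TIER


def tier_for_change(paths: list[str]) -> int:
    """A change takes the strictest (highest) tier of any file it touches."""
    return max((tier_for_path(p) for p in paths), default=DEFAULT_TIER)


print(tier_for_change(["docs/setup.md"]))
print(tier_for_change(["docs/setup.md", "checkout/cart.py"]))
```

Taking the maximum means a docs-only change can auto-merge, while the same docs edit bundled with a checkout change inherits the Tier 3 requirements.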

Step 3: Replace Story Points With Experiments

“We don’t understand how anyone can forecast how long it will take an agent to build something.”

Correct. Story points assumed predictable human implementation speed. With agents, a 13-point feature might take 45 minutes; a 3-point task might stall for two days. The variance kills estimation entirely.

The fix: experiments as the planning unit.

Old planning: “This is 8 story points. Sprint 14. Ship April 3.”

New planning: “Experiment #47. Hypothesis: social proof lifts pricing-to-checkout conversion from 4.2% to 6.2%. Agent builds in ~2hrs. Evaluation: 14 days. Decision date: April 17. If positive → Exp #48 (full testimonial system).”

Stop estimating build time (agents make it unpredictable). Start estimating learning time — the evaluation period. “We can run 8 experiments this sprint with adequate traffic allocation” is a fundamentally better planning unit than “we have 42 story points of capacity.”
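Learning time can be estimated from traffic. The sketch below uses the standard normal-approximation sample-size formula for comparing two conversion rates; the 5% significance level, 80% power, and the daily traffic figure are assumptions, and your analytics tool may use a different method.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, target: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per arm for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + target * (1 - target)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (target - baseline) ** 2)


def evaluation_days(baseline: float, target: float,
                    daily_visitors_per_variant: int) -> int:
    """Days until each arm has collected its required sample."""
    needed = sample_size_per_variant(baseline, target)
    return math.ceil(needed / daily_visitors_per_variant)


# Experiment #47: 4.2% baseline, 6.2% target; 150 visitors per arm per day is hypothetical.
print(sample_size_per_variant(0.042, 0.062))
print(evaluation_days(0.042, 0.062, daily_visitors_per_variant=150))
```

Smaller expected lifts need far more traffic, which is what limits how many experiments a sprint can hold.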

Force every backlog item through the experiment frame: state the hypothesis, the success metric, the evaluation period. If you can’t, the item isn’t ready. Teams consistently find that 40-60% of their backlog items can’t survive this filter — and every one of those items would have been built, shipped, and counted as “velocity” while moving nothing.

Step 4: Build the Measurement Stack

Layer 1 — Semantic Classification: Tag every commit to its experiment. Start simple: commit prefixes like [exp-47] Add social proof component. Graduate to a post-merge webhook that sends the diff to an LLM classifier mapping it to your metrics tree.
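The simple version of Layer 1 needs little more than a regular expression. This sketch assumes the `[exp-NN]` prefix convention described above; the function names are illustrative.

```python
import re

EXP_TAG = re.compile(r"\[exp-(\d+)\]")


def experiment_ids(commit_subject: str) -> list[int]:
    """Extract every experiment ID tagged in a commit subject line."""
    return [int(number) for number in EXP_TAG.findall(commit_subject)]


def untagged(commit_subjects: list[str]) -> list[str]:
    """Commits with no experiment tag, i.e. work that cannot be attributed."""
    return [subject for subject in commit_subjects if not experiment_ids(subject)]


subjects = ["[exp-47] Add social proof component", "Fix typo in footer"]
print(experiment_ids(subjects[0]))
print(untagged(subjects))
```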

Layer 2 — The Metrics Tree: Build a hierarchy from goals to experiments:

    Revenue Growth (goal)
      → Conversion Rate (metric)
          → Pricing Redesign (feature)
              → Exp #47: Social Proof [baseline 4.2%, target 6.2%]
              → Exp #48: Testimonials [baseline 4.2%, target 5.5%]
          → Checkout Optimization (feature)
              → Exp #51: One-Click [baseline 67%, target 75%]
      → Retention (metric)
          → Onboarding Redesign (feature)
              → Exp #61: Interactive Tutorial [baseline 23% D7, target 28%]

Every classified commit links to a node. Your Git history becomes a measurement system answering: “What percentage of Q2 effort went to retention vs. monetization?”
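Here is a rough sketch of how that effort question could be answered once commits carry experiment IDs. The mapping mirrors the example tree (placing Checkout Optimization under Conversion Rate is my reading of it), and using commit counts as a proxy for effort is an assumption.

```python
from collections import Counter

# Experiment ID -> (feature, metric), following the example tree.
EXPERIMENT_TO_NODE = {
    47: ("Pricing Redesign", "Conversion Rate"),
    48: ("Pricing Redesign", "Conversion Rate"),
    51: ("Checkout Optimization", "Conversion Rate"),
    61: ("Onboarding Redesign", "Retention"),
}


def effort_share_by_metric(commit_experiment_ids: list[int]) -> dict[str, float]:
    """Share of classified commits per metric; unknown IDs count as 'unmapped'."""
    counts = Counter(
        EXPERIMENT_TO_NODE.get(exp_id, (None, "unmapped"))[1]
        for exp_id in commit_experiment_ids
    )
    total = sum(counts.values())
    return {metric: n / total for metric, n in counts.items()} if total else {}


# One experiment ID per classified commit.
print(effort_share_by_metric([47, 47, 51, 61]))
# {'Conversion Rate': 0.75, 'Retention': 0.25}
```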

Layer 3 — Three-Number Dashboard:

| Metric | Measures | Healthy range |
| --- | --- | --- |
| Experiments running | Sprint workload | 6-12 per team |
| Experiments evaluated | Learning throughput | 80%+ completing eval |
| EVR | Impact efficiency | 40%+ hitting threshold |

Three numbers replace 20 charts of PR analytics. If these trend right, your team is generating real velocity — not the performative kind.
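The three numbers can be computed from five counts. In this sketch the input names are hypothetical, and "experiments evaluated" is interpreted as the share of experiments that reached their evaluation date and actually got evaluated.

```python
def dashboard(running: int, due_for_eval: int, evaluated: int,
              shipped: int, hit_threshold: int) -> dict[str, tuple[float, bool]]:
    """Return each dashboard number paired with whether it is in the healthy range."""
    completion = evaluated / due_for_eval if due_for_eval else 0.0
    evr = hit_threshold / shipped if shipped else 0.0
    return {
        "experiments_running": (running, 6 <= running <= 12),
        "eval_completion_rate": (completion, completion >= 0.80),
        "evr": (evr, evr >= 0.40),
    }


for name, (value, healthy) in dashboard(8, 10, 9, 10, 3).items():
    print(f"{name}: {value} ({'healthy' if healthy else 'needs attention'})")
```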

Tooling: Linear or Notion for metrics tree + GitHub Actions for commit classification + Amplitude/Mixpanel for automated experiment evaluation. One engineer, one sprint for the basic version. Two engineers, two sprints for LLM-enhanced classification.

Step 5: The Acceleration Trap Diagnostic

Teams are baking 25-40% AI speed gains into their Q2 plans. Roadmaps are denser. Stakeholder expectations are higher.

The question nobody’s asking: acceleration of what?

Run this on your Q2 roadmap right now: for every committed feature, can you state (a) the hypothesis, (b) the target metric with baseline, and (c) the success threshold? Features missing any of those three are unmetered acceleration — you’re spending agent compute on work you can’t evaluate.
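If your roadmap lives in a spreadsheet or tracker export, the diagnostic reduces to a completeness check. This is a sketch with hypothetical field names and a made-up three-item roadmap.

```python
REQUIRED = ("hypothesis", "target_metric", "baseline", "success_threshold")


def unmetered(roadmap: list[dict]) -> list[str]:
    """Names of roadmap items missing any of the required fields."""
    return [item["name"] for item in roadmap
            if any(not item.get(field) for field in REQUIRED)]


def metered_share(roadmap: list[dict]) -> float:
    """Fraction of roadmap items that can be evaluated after shipping."""
    if not roadmap:
        return 0.0
    return 1 - len(unmetered(roadmap)) / len(roadmap)


# Hypothetical roadmap rows for illustration.
roadmap = [
    {"name": "Social proof", "hypothesis": "Logos increase trust",
     "target_metric": "pricing_to_checkout_rate", "baseline": "4.2%",
     "success_threshold": "+2pp"},
    {"name": "Dark mode", "hypothesis": "Users want it"},
    {"name": "New settings page"},
]
print(unmetered(roadmap))
print(f"{metered_share(roadmap):.0%} of the roadmap is metered")
```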

The team that ran this diagnostic found only 35% of their Q2 roadmap had defined success metrics. They restructured: cut features without metrics or reframed them as experiments with explicit hypotheses. Total committed features dropped 30%. Measured impact for the quarter went up. The agents made building cheap; the measurement system ensured cheap work was valuable work.

Step 6: Coordinate With Experiment Owners

The math that breaks everything: 10 engineers × 12 experiments/week × AI agents = 120 production changes weekly. Some touch live revenue. Some conflict with each other.

Task force model: single owners per work stream.

Not committees. Not alignment meetings. One person owns retention experiments. One owns onboarding. One owns monetization. Each owner:

- Sets experiment priority (what runs next, what gets killed)
- Defines success metrics with baselines and thresholds
- Has veto power over what ships in their domain
- Reviews results weekly: expand, iterate, or kill
- Reports their stream’s EVR

This creates an experiment marketplace. Engineers propose. Owners decide what runs. Agents make implementation cheap. Owners make it coherent.

The alternative — experiment sprawl — is hundreds of micro-changes shipping without enough traffic for statistical significance, actively conflicting with each other, with nobody empowered to say “stop, we have six onboarding variants running and none have enough data.” One ~200-person org that moved to the task force model went from 300+ scattered experiments to 80 focused ones. Their learning rate (statistically significant results per month) doubled. Fewer experiments, more signal.
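A back-of-the-envelope sketch shows why sprawl starves experiments of data. It assumes traffic on a surface is split evenly and exclusively across all arms, which is a simplification; the sample size and visitor numbers are hypothetical.

```python
import math


def days_to_sample(required_per_arm: int, daily_visitors: int,
                   concurrent_experiments: int, arms_per_experiment: int = 2) -> int:
    """Days until every arm reaches its sample size under an even, exclusive split."""
    arms = concurrent_experiments * arms_per_experiment
    per_arm_daily = daily_visitors / arms
    return math.ceil(required_per_arm / per_arm_daily)


# Hypothetical onboarding surface: 3,000 visitors a day, 2,000 users needed per arm.
print(days_to_sample(2000, 3000, concurrent_experiments=6))
print(days_to_sample(2000, 3000, concurrent_experiments=2))
```

Under these assumptions, six concurrent experiments take nearly three times as long to read out as two, which is the trade-off a stream owner is there to manage.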

Tactical Tip: The 5-Minute Velocity Audit

Run this right now. Five questions, five minutes.

1. PR-to-Feature Ratio. Merged PRs this week ÷ distinct user-facing features shipped. If the answer is above 5 (more than five PRs per feature), you’re measuring agent activity, not team productivity.

2. EVR. Features with pre-defined success metrics that hit their target last sprint ÷ total shipped. If you can’t calculate this, that’s your answer — your measurement system is broken.

3. Tier Coverage. Classify each active project: Tier 1 (auto-merge), Tier 2 (one review), Tier 3 (full approval). More than 20% unclassified = guardrail debt accumulating risk daily.

4. Experiment Ownership. Name the single owner for each work stream. “The team decides together” = a coordination gap that compounds as agents accelerate output.

5. Metrics Tree Trace. Pick any active experiment. Trace it: Experiment → Feature → Product Metric → Business Goal. If it takes more than 30 seconds, your measurement infrastructure needs work before you add more speed.

Score yourself:

4-5 answered concretely: You’re ahead of 90% of AI PM teams. Share your system.

2-3 answered: Foundation exists. Build the metrics tree this week.

0-1 answered: Your velocity metrics are lying to you. Start today: build the tree, write one Feature Impact Brief per active project, assign stream owners.

Run this audit every two weeks. Share with your team. Watch the conversation shift from “how much did we ship?” to “what did we learn?”

That’s the complete framework. EVR instead of PRs. Experiments instead of story points. Automation tiers instead of one-size-fits-all review. Metrics trees instead of PR dashboards. Experiment owners instead of alignment meetings.

The measurement system for AI-augmented teams is being written right now — by the teams living through this transition. Forward this to a PM watching their PR count triple while wondering why nothing feels faster.

Next week: How to build an experiment backlog that AI agents can execute autonomously, without human babysitting at every step.

What velocity metric is working for your team? Hit reply and tell us. The best frameworks come from the field, not from theory.

— PMtheBuilder