The “Or Else” Problem

How to Communicate AI Acceleration Without Threatening Your Team

Quick Hits

Fortune 500 companies confirmed production agentic AI deployments at GTC 2026. The question isn’t whether AI agents are coming to your PDLC — it’s whether your team knows what that means for their daily work. If you haven’t had that conversation yet, you’re already behind.

Three US states passed AI transparency laws this month. If your product touches AI (and in 2026, it does), compliance is now a PM responsibility. The teams building review workflows today will save months of scrambling later.

The WEF’s latest workforce report confirms it: creative thinking, resilience, and flexibility remain the most critical skills — even as AI reshapes every function. Tools don’t replace judgment. But try telling that to a team that just heard “your output should double.”

AI PM tooling hit 25+ dedicated products in 2026 — from meeting intelligence to roadmap automation. The ecosystem is mature. The culture isn’t. And that gap is this week’s deep dive.

Deep Dive: The “Or Else” Problem

“When I hear ‘you were expected to do 10 points and now you’re expected to do 20,’ the impact is I hear the silent ‘or else’ — that is not a nice feeling.”

A design leader said this during a cross-functional AI kickoff at a major tech company. Not in a Slack rant. Not in a private 1:1. In a room full of senior leaders — VP of engineering, senior PM leadership, heads of design — during a meeting explicitly designed to align everyone on AI acceleration strategy.

The room went quiet. Because every person in it had either felt that “or else” — or been the one creating it without realizing.

If you’re leading a product team in 2026, you’ve heard some version of this. The “or else” problem is the defining leadership challenge of AI-accelerated product development, and it’s showing up everywhere: in all-hands presentations that land as threats, in OKRs that feel punitive, in hallway conversations where “exciting AI strategy” curdles into “implicit job insecurity.”

Here’s what’s actually going wrong, why it’s going wrong, and a concrete framework to fix it — with specific steps sized for your org.

📖 Key terms used in this piece:

Invariants — Non-negotiable outcomes that must hold true regardless of what process a team uses (e.g., “revenue data accuracy stays above 99.7%”). Your “anchor points.”

Proof story — A specific, numbers-backed example where AI acceleration created undeniable customer or business value. The antidote to the “or else.”

Bubble Model — A framework where each unit of work is sized to its complexity: small bubbles (one person, ships fast) vs. large bubbles (full team, structured process). Different complexity = different process.

Problem #1: The Asymmetry Nobody Acknowledges

The root cause of the “or else” problem isn’t bad intentions. It’s an asymmetry that most leadership teams have created without noticing.

Engineering gets a clear, measurable, motivational north star for AI adoption — something like “90% of code AI-generated by H1.” Love it or hate it, it’s concrete. Engineers know what success looks like. They can track progress weekly. The goal describes a new capability to aspire to.

Product and design get... nothing. No equivalent goal. No measurement criteria. Just a vague expectation to “use AI more” paired with the unspoken assumption that output should roughly double.

Here’s why this asymmetry is corrosive: “90% of code AI-generated” sounds aspirational because it describes what engineers will be able to do. “Do 20 points instead of 10” sounds threatening because it describes what you’re expected to do — with no new tools, no new process, and no clear path to get there.

Same company. Same AI strategy. Two completely different emotional responses. Engineering feels excited. Product and design feel targeted. And the leaders who created this gap usually have no idea it exists.

Problem #2: The Speed Trap

The asymmetry creates resentment. What happens next is worse.

Let’s say AI makes your engineers 2x faster. They’re shipping code at twice the rate. But designers are still in their traditional tools with their traditional timelines. PMs are writing specs the same way they did last year. The collaboration patterns that determine how fast a feature actually ships — handoffs, reviews, feedback loops — haven’t changed.

What happens: engineering ships v2 while design is iterating on v1. PRDs get outdated hours after they’re written. Designers feel steamrolled. PMs feel like air traffic controllers who’ve lost radar. Engineers feel frustrated that nobody can keep up.

A senior engineering leader we observed said it plainly: “You have to throw out your old playbook for how you build code. It doesn’t stop there.” Exactly right — product specs, design workflows, QA processes, and go-to-market playbooks all need to be rethought. Not sped up. Rethought.

Speed without alignment creates more friction, not less. Organizations that treat AI acceleration as a throughput problem find themselves shipping faster into bigger messes.

So the asymmetry creates resentment, and the speed trap creates chaos. How do you fix both? By changing the story you’re telling.

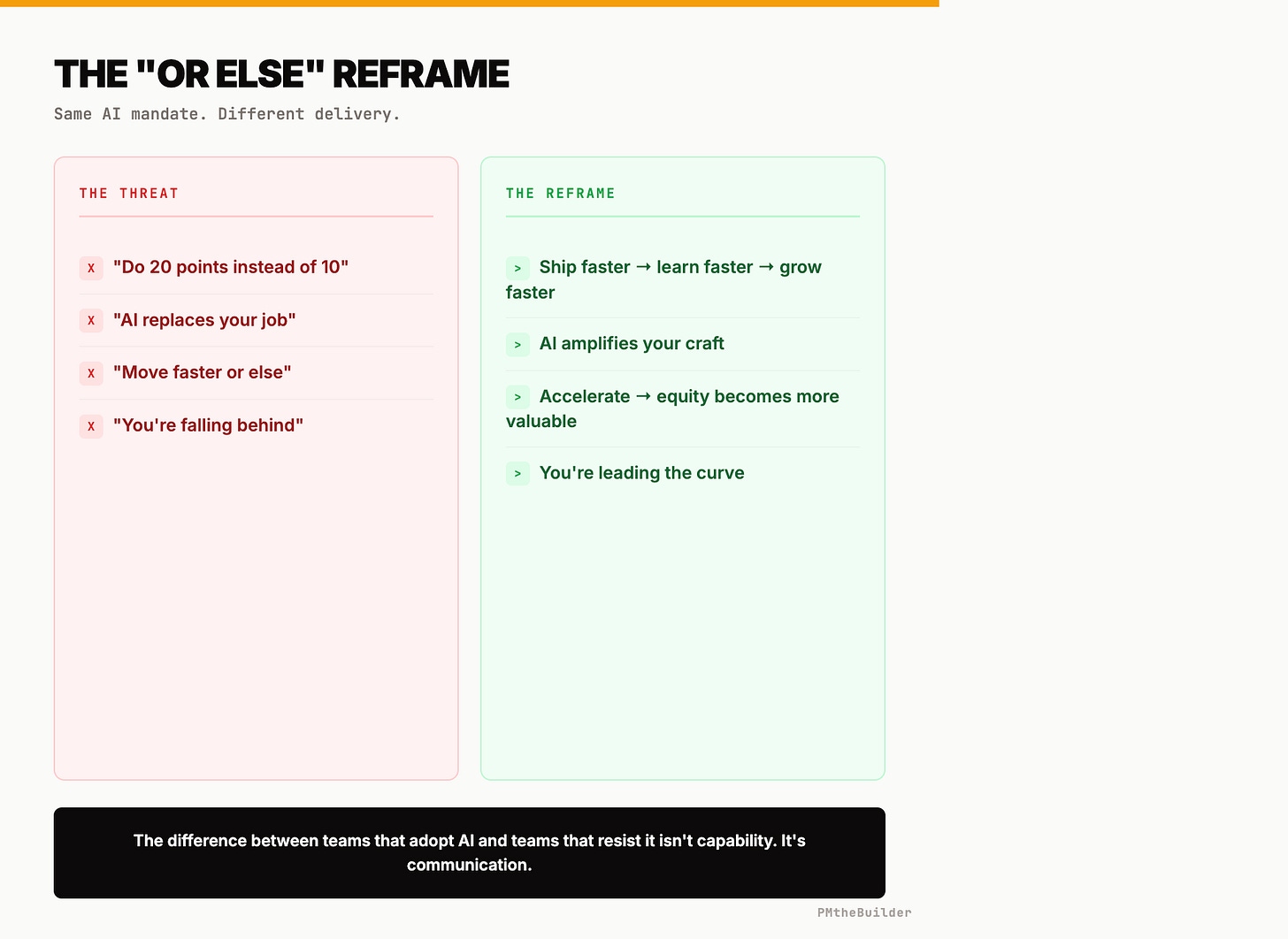

The Reframe: From Stick to Ladder

The frame that fails: “We’re adopting AI so each of you can deliver more output.”

People hear: “Produce more or you’re replaceable.” Many tech layoff announcements in 2025 cited AI efficiency, so your team won’t give you the benefit of the doubt. They hear the “or else” whether you say it or not.

The frame that works: “Accelerating feature delivery means we learn faster from customers. Learning faster means we scale the right products and kill the wrong ones before wasting months. Scaling means revenue growth. Revenue growth means everyone’s equity becomes more valuable — and we get to invest in the ambitious ideas we’ve been putting off.”

This isn’t spin. It’s a structural reframe:

| Stick Frame | Ladder Frame |
| --- | --- |
| “Do more output” | “Unlock more leverage” |
| Speed is the goal | Learning is the goal |
| AI replaces effort | AI elevates judgment |
| Your output isn’t enough | Your upside isn’t unlocked yet |

Across every team we’ve seen navigate AI acceleration successfully, leaders all do the same thing: they connect the acceleration to personal upside — equity value, career growth, more interesting problems — not organizational demands.

But the reframe only sticks if you have evidence. Which means you need a proof story.

The Proof: 79% Revenue Increase in Two Weeks

We observed a B2B SaaS product team that built a copilot feature — an AI-powered audit tool embedded in their platform. The tool analyzed each customer’s account configuration, identified underutilized capabilities, and generated a prioritized optimization report: specific steps, estimated revenue impact for each change, ordered by effort-to-value.

One of their largest accounts, a mid-market e-commerce company spending six figures annually, ran the audit. The AI identified three underutilized capabilities: automated audience segmentation, predictive send-time optimization, and an AI-powered content workflow. The report told them exactly what to do, in what order, and what each change was worth.

The customer implemented the top three recommendations. The result: 79% revenue increase in two weeks — driven by AI-generated insights that would have taken months to produce manually.

That number changed the internal conversation overnight. The team didn’t need to be told “do more.” They wanted to know: “What else can we build that does THAT?”

Every team navigating AI acceleration needs at least one proof story like this. Not a theoretical projection. A real result, with real numbers, that your team directly created. Here’s how to find yours:

Ask engineering: “Which AI-accelerated feature has the best customer outcome data?”

Pull the metrics: revenue impact, time-to-value, customer feedback.

Build a 90-second narrative: what you built, what the customer did, what happened.

Lead with this story at every AI-related meeting — before targets, before mandates, before anything else.

If you don’t have a proof story yet, finding one should be your #1 priority.

Guardrails, Not Prescriptions

Now for how work actually gets done. Most AI acceleration strategies fail here by getting prescriptive: “Every PM must use AI for PRDs.” “Design reviews must include AI alternatives.”

These mandates create compliance theater. People run ChatGPT on their PRDs to check a box, not because it helps them think.

What works instead: define invariants and let teams experiment with everything else.

Here are five real invariants we’ve seen work:

| Invariant | What it protects | What it frees up |
| --- | --- | --- |
| Revenue data accuracy stays above 99.7% | Financial integrity | Teams choose any AI tool for reporting/dashboards |
| Customer-facing features pass RFC review with 2+ senior engineers | Production quality | Teams can AI-draft the RFC, prototype the feature however they want |
| All customer data access is logged and auditable | Privacy & compliance | Teams architect AI features with full design freedom |
| WCAG AA accessibility met on every shipped feature | User experience | AI can generate 50 design variations; all must be accessible |
| Customer-facing copy reviewed by a human before launch | Brand & accuracy | AI drafts, refines, and A/B tests; human gives final approval |

The power of invariants is what they don’t say. They don’t prescribe tools or mandate processes. A senior PM leader we observed framed it well: “Define the anchor points — the things that absolutely cannot break — and give teams freedom to experiment with everything else.”

The results: some teams went all-in on AI-generated specs. Others used AI for research synthesis. One team built a novel workflow where AI cross-referenced customer feedback tickets against the existing roadmap, saving 15 hours per sprint of manual triage. No approach was prescribed. All delivered value. None violated an invariant.
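If it helps to make the idea concrete, here is a minimal sketch of the five invariants written as release checks. It is an illustration under assumptions, not a tool any of these teams used: the `Release` record, its field names, and `violated_invariants` are all hypothetical, and it assumes every release passed in is customer-facing. Note what the checks ignore: `tools_used` is recorded but never inspected, which is the whole point of an invariant.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Release:
    """Hypothetical summary of one customer-facing release."""
    revenue_data_accuracy: float  # fraction between 0 and 1
    senior_rfc_reviewers: int     # senior engineers who approved the RFC
    data_access_logged: bool      # customer data access is logged and auditable
    meets_wcag_aa: bool           # shipped UI meets WCAG AA
    copy_human_reviewed: bool     # a human approved customer-facing copy
    tools_used: tuple[str, ...] = ()  # recorded for context, never checked


# Each invariant is an outcome that must hold, not a process to follow.
INVARIANTS: dict[str, Callable[[Release], bool]] = {
    "Revenue data accuracy stays above 99.7%":
        lambda r: r.revenue_data_accuracy > 0.997,
    "Customer-facing features pass RFC review with 2+ senior engineers":
        lambda r: r.senior_rfc_reviewers >= 2,
    "All customer data access is logged and auditable":
        lambda r: r.data_access_logged,
    "WCAG AA accessibility met on every shipped feature":
        lambda r: r.meets_wcag_aa,
    "Customer-facing copy reviewed by a human before launch":
        lambda r: r.copy_human_reviewed,
}


def violated_invariants(release: Release) -> list[str]:
    """Return the names of any invariants this release breaks."""
    return [name for name, holds in INVARIANTS.items() if not holds(release)]
```

A release that returns an empty list is free to ship, whatever tools and workflow produced it. Anything else blocks on the named invariant and nothing more.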

The Bubble Model: Right-Size Your Process

There’s no singular product development lifecycle anymore. Different complexity demands different process — and AI shifts what’s possible at each level.

How it works: every unit of work is a “bubble” sized to its complexity. Here’s the decision framework:

🫧 Solo Bubble — 1 person, ships in hours to days

Criteria: Bug fix, config change, or enhancement with no cross-team UX impact and no compliance implications.

Example: Customer reports a dashboard alignment bug. One engineer uses AI to identify the cause, generate the fix and test, verify CI passes, and ship to production. Elapsed time: 47 minutes. No PM. No design review. No sprint planning. Invariants (code review, test coverage, accessibility) met by the individual.

🫧🫧 Squad Bubble — 2-4 people, ships in 1-2 weeks

Criteria: New feature or enhancement touching one team, with customer-facing UX changes but no compliance or data migration needs.

Example: Three enterprise prospects want the same reporting feature. PM writes a lightweight brief (AI drafts from sales call transcripts, PM sharpens for 30 minutes). Engineer builds with AI pair programming. Designer reviews UX asynchronously and flags one interaction fix. Ships in eight days.

🫧🫧🫧 Full Bubble — Full cross-functional team, ships in 1-3 months

Criteria: Touches multiple teams, has compliance implications, requires data migration, or affects every user.

Example: Rebuilding the onboarding flow for a new customer segment. Six teams involved. Structured RFC. Design sprints. Legal review. AI accelerates every step but coordination overhead exists because the blast radius is high.

The decision tree:

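Is it a bug fix, config change, or small enhancement with no cross-team UX impact and no compliance implications? → Solo

Does it touch multiple teams, have compliance implications, require a data migration, or affect every user? → Full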

Everything else → Squad
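For teams that want the tree in a planning tool rather than on a poster, here is one way it might be sketched in code. The `WorkItem` fields and the `classify` function are hypothetical names chosen for illustration. The sketch also makes one assumption the tree leaves implicit: the Full criteria are checked first, so that work with a high blast radius is never sized as Solo even if it looks like a bug fix.

```python
from dataclasses import dataclass
from enum import Enum


class Bubble(Enum):
    SOLO = "Solo"
    SQUAD = "Squad"
    FULL = "Full"


@dataclass(frozen=True)
class WorkItem:
    """Hypothetical description of one unit of work at planning time."""
    is_fix_or_config: bool     # bug fix, config change, or small enhancement
    changes_customer_ux: bool  # visible UX change for customers
    teams_touched: int         # teams doing real work; async reviewers don't count
    has_compliance_implications: bool = False
    needs_data_migration: bool = False
    affects_every_user: bool = False


def classify(item: WorkItem) -> Bubble:
    """Size a work item as Solo, Squad, or Full."""
    # Assumption: Full criteria win over everything else.
    if (
        item.teams_touched > 1
        or item.has_compliance_implications
        or item.needs_data_migration
        or item.affects_every_user
    ):
        return Bubble.FULL
    # Small, contained work with no UX impact can ship from one person.
    if item.is_fix_or_config and not item.changes_customer_ux:
        return Bubble.SOLO
    # Everything else is a Squad bubble.
    return Bubble.SQUAD
```

Run against the three examples above, the dashboard alignment bug (`WorkItem(True, False, 1)`) comes out Solo, the reporting feature (`WorkItem(False, True, 1)`) comes out Squad, and the onboarding rebuild (six teams, legal review) comes out Full.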

The critical mistake: applying Full-bubble process to Solo-bubble work (47-minute fix takes two sprints) or Solo-bubble speed to Full-bubble work (ship fast, break things for your biggest customers). AI shifts work toward smaller bubbles — but the genuinely hard problems still need the Full bubble. They still need craft.

Craft Moved Up the Stack

“You have to throw out your old playbook for how you build code.” That engineering leader was talking about code. The principle applies everywhere.

PRDs: Old playbook = days writing from a blank page. New = AI generates a draft from meeting notes in 20 minutes. You spend your time questioning assumptions, finding the edge case that changes the approach, connecting insights across customer segments. Value moved from writing to thinking.

User research: Old = manually coding 100 transcripts over two weeks. New = AI processes 500 interviews in an hour. You spend time on what AI can’t do — the empathetic leap from “customers say they want speed” to “customers actually need confidence their data is current.” Value moved from processing to interpreting.

Design: Old = three days for three polished options. New = AI generates 50 variations in 30 minutes. You spend time curating — selecting the version that serves the user’s emotional need, catching the accessibility issue, making the typography feel trustworthy. Value moved from production to taste.

The craft hasn’t disappeared. It’s elevated — from execution to judgment, from “can you do this?” to “should we, and what’s the version that creates the most value?” That’s not threatening. It’s the most exciting shift in product work in a decade. But only if your team hears it before they hear the “or else.”

The 6-Part Communication Framework (Sized for Your Org)

Here’s the tactical playbook. Each step builds on the previous.

1. Lead with the proof story. Find your 79% moment and open every AI-related meeting with it — before targets, before mandates.

10-person startup: Founder shares it in a team standup. 5 minutes.

100-person org: VP of Product presents it at the monthly all-hands with customer data. 10 minutes.

1000+ org: Executive sponsors a 2-minute video case study distributed company-wide.

2. Name the asymmetry. Say it out loud: “Engineering has a clear north star for AI. Product and design don’t yet — that’s on us to fix.”

Any size: This sentence works in any forum. What changes is who says it. At a startup, the CEO. At an enterprise, the CPO or CTO — in a joint statement so it doesn’t look like blame-shifting.

3. Co-create function-specific north stars. Run a 90-minute working session to define goals for product and design that feel as concrete as “90% AI-generated code.”

10-person: One session with the whole team. Walk out with 2 goals.

100-person: Session with function leads, then cascade to teams for feedback within one week.

1000+: Pilot with 2-3 teams, refine the goals, then roll out in the next planning cycle.

Goals that work describe liberation, not obligation: “Time-from-insight-to-shipped-experiment drops 60%.” “PMs spend <20% of their week on status updates.” “Design goes from concept to validated prototype in 48 hours.”

4. Publish invariants. Write down 5-7 non-negotiable outcomes. Share them widely. Explicitly state: “Everything not on this list is yours to experiment with.”

If leadership resists: Start with just 3 invariants everyone agrees on. Expand quarterly as trust builds. The point is momentum, not perfection.

5. Implement the Bubble Model. Create a one-page decision tree (Solo / Squad / Full) and post it where teams plan work.

First week: Teams self-select using the framework for new work only. Don’t re-classify work already in flight.

First month: Retro on whether work was correctly sized. Adjust criteria based on edge cases (the most common: “Is this a Squad or Full? It touches one other team but only for an API contract.” Answer: Squad, with the other team as an async reviewer).

6. Replace output metrics with outcome metrics. Retire story points, PRDs-per-quarter, and designs-produced. Replace with:

Revenue per shipped feature

Time-from-customer-insight-to-experiment

Customer-reported impact score

Experiment velocity (learn cycles per quarter)

Share monthly. These metrics reward teams creating value, not teams producing artifacts.
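If you need a starting point for the monthly report, three of these metrics reduce to simple arithmetic. The sketch below is a rough illustration with hypothetical names (`Experiment`, `revenue_per_shipped_feature`, and so on); how you attribute revenue to a feature is your call and is assumed to happen upstream. The customer-reported impact score is left out because it depends entirely on how you survey customers.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass(frozen=True)
class Experiment:
    """Hypothetical record of one learn cycle."""
    insight_date: date  # when the customer insight was captured
    launch_date: date   # when the experiment reached customers


def revenue_per_shipped_feature(attributed_revenue: float, features_shipped: int) -> float:
    """Revenue attributed to shipped features, divided by how many shipped."""
    if features_shipped == 0:
        return 0.0
    return attributed_revenue / features_shipped


def median_insight_to_experiment_days(experiments: list[Experiment]) -> float:
    """Median days from customer insight to launched experiment."""
    # Assumes at least one experiment; median() raises on an empty list.
    return median((e.launch_date - e.insight_date).days for e in experiments)


def experiment_velocity(experiments: list[Experiment], start: date, end: date) -> int:
    """Number of experiments launched between start and end, inclusive."""
    return sum(1 for e in experiments if start <= e.launch_date <= end)
```

The median is used instead of the mean so that one stalled experiment doesn't hide the fact that most of them are moving quickly.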

Tactical Tip: The 3-Sentence Reframe

Next time someone frames AI acceleration as “doing more” — in an all-hands, a skip-level, or a 1:1 with an anxious team member — use this:

“The goal isn’t to do more of the same work faster. It’s to eliminate the work that doesn’t require human judgment, so we can spend all our time on the work that does. That’s not a threat to your role — it’s an upgrade.”

Write it down. Say it once out loud so it doesn’t sound rehearsed. Use it every time you hear the “or else” forming. It works because it reframes AI from replacement to elevation — and elevation is the only frame that gets people to adopt rather than resist.

Take the AI PM Eval

Where do you actually stand as an AI Product Manager? Not where you think you stand — where the data says.

We built a free self-assessment that scores your AI PM readiness across the competencies that separate the PMs thriving in this transition from the ones white-knuckling through it. Blunt. Specific. Under 5 minutes.

No email required. No sales pitch. Just signal.

PMtheBuilder — Built for the PMs building with AI.